Nobody ever looked at a Terraform[terraform] pipeline and called it "Dave, our Infrastructure Engineer." Nobody named their CI/CD[cicd] workflow "Jenkins the QA Lead." You wrote a cron job[cron] that rotates logs and you called it a cron job, because that's what it is.

It does a specific thing. The fact that it replaced something a human used to do manually doesn't make it a digital human.

So why, the moment we add natural language to the interface, does the entire industry lose its mind and start handing out job titles to shell scripts?

None of this is new

The AI engineering community keeps inventing terminology for patterns that already exist. Most of what gets branded as a breakthrough is something we've been doing for years, just with a different interface.

Context engineering is the hot one right now. Carefully curate what information goes into your LLM[llm] prompt so you get reliable, relevant outputs. Strip out noise, keep signal, structure it so the model can use it.

That's data wrangling. Every data engineer who's ever cleaned up a messy CSV knows the drill: trim the empty columns, normalise the date formats, deduplicate the rows, pipe the result into the next stage. The only difference is the destination. Instead of feeding clean data into a dashboard or a database, you're feeding it into a prompt. The discipline is identical. Garbage in, garbage out.
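
To make the comparison concrete, here is a minimal Python sketch of the same cleanup steps with a prompt as the destination. The function name `curate_context`, the `date` field, and the DD/MM/YYYY input format are all assumptions for illustration, not anything a particular framework prescribes.

```python
from datetime import datetime

def curate_context(rows: list[dict]) -> str:
    """Clean raw string records, then render them as prompt-ready text."""
    seen = set()
    cleaned = []
    for row in rows:
        # Trim the empty columns: drop keys whose values are blank.
        row = {k: v.strip() for k, v in row.items() if v and v.strip()}
        if "date" in row:
            # Normalise the date formats: accept DD/MM/YYYY, emit ISO 8601.
            try:
                parsed = datetime.strptime(row["date"], "%d/%m/%Y")
                row["date"] = parsed.date().isoformat()
            except ValueError:
                pass  # not DD/MM/YYYY (e.g. already ISO): leave unchanged
        # Deduplicate the rows on their cleaned content.
        key = tuple(sorted(row.items()))
        if row and key not in seen:
            seen.add(key)
            cleaned.append(row)
    # The only new part: the destination is a prompt, not a dashboard.
    return "\n".join(
        ", ".join(f"{k}={v}" for k, v in r.items()) for r in cleaned
    )
```

Nothing in that function knows or cares that a model sits at the other end, which is rather the point.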

Agent orchestration: scheduling agents, managing execution order, handling failures, routing outputs. That's a workflow engine. That's Airflow[airflow]. That's Step Functions[stepfunctions]. That's make targets with dependencies. We've had job schedulers since the 1970s. The fact that the jobs now speak English doesn't change what a DAG[dag] is.
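
As a rough illustration, Python's standard library already ships the scheduling core. The sketch below assumes Python 3.9+ for `graphlib`; the job names and the stub lambdas in `JOBS` are hypothetical stand-ins for whatever each step really does.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Each key is a job; each value is the set of jobs it must wait for.
DEPENDENCIES = {
    "fetch": set(),
    "summarise": {"fetch"},
    "review": {"summarise"},
    "publish": {"review"},
}

# Hypothetical jobs. Any of them could wrap an LLM call;
# the scheduler neither knows nor cares.
JOBS = {
    "fetch": lambda upstream: "raw notes",
    "summarise": lambda upstream: f"summary({upstream['fetch']})",
    "review": lambda upstream: f"reviewed({upstream['summarise']})",
    "publish": lambda upstream: f"published({upstream['review']})",
}

def run_pipeline(dependencies, jobs):
    """Run every job after its dependencies, handing it their results."""
    results = {}
    for name in TopologicalSorter(dependencies).static_order():
        upstream = {dep: results[dep] for dep in dependencies[name]}
        results[name] = jobs[name](upstream)
    return results
```

Calling `run_pipeline(DEPENDENCIES, JOBS)` walks the graph in dependency order. A production engine adds retries, persistence, and parallelism on top, but the DAG underneath is the same.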

More examples of renamed patterns

RAG[rag]: search an index, get relevant documents, stuff them into the prompt alongside the question. That's Elasticsearch[elasticsearch] with extra steps. Every search feature you've ever built does the same thing: query, retrieve, present. RAG just presents to a model instead of a user.
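
Here is a deliberately tiny sketch of that query, retrieve, present loop. The word-overlap scorer is a toy stand-in for a real search index, and `retrieve` and `build_prompt` are names I've made up for the example.

```python
def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Rank documents by how many query words they share (a toy scorer)."""
    terms = set(query.lower().split())
    scored = [(len(terms & set(doc.lower().split())), doc) for doc in documents]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    # Keep the top k, dropping anything with no overlap at all.
    return [doc for score, doc in ranked[:k] if score > 0]

def build_prompt(query: str, documents: list[str]) -> str:
    """Present the retrieved documents to a model instead of a user."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"
```

Swap the scorer for a proper index or an embedding lookup and the shape does not change: the retrieval step is still search, and the prompt is just the results page.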

Tool use and function calling: the model "decides" to call an external API based on the conversation. That's a webhook. That's an integration. Your agent picking which tool to call is a glorified switch statement with fuzzy matching. We've been writing API routers for decades.
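
If that sounds glib, here is roughly what the router looks like in Python. The `TOOLS` registry and both handlers are hypothetical, and `difflib` string similarity stands in for the model's far more capable matching.

```python
import difflib

# A hypothetical tool registry: name -> handler.
TOOLS = {
    "get_weather": lambda city: f"weather for {city}",
    "get_time": lambda city: f"time in {city}",
}

def dispatch(requested_tool: str, argument: str) -> str:
    """Route a (possibly inexact) tool name to a registered handler."""
    match = difflib.get_close_matches(requested_tool, list(TOOLS), n=1, cutoff=0.6)
    if not match:
        raise KeyError(f"no tool resembling {requested_tool!r}")
    return TOOLS[match[0]](argument)
```

The model's contribution is picking `requested_tool` and `argument` from a conversation, which is new. Everything after that choice is an API router.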

Prompt chaining: output of one LLM call becomes input to the next. That's Unix pipes. cat file | grep pattern | sort | uniq. The pipe character is doing the same job as your agent framework's "chain" abstraction, just without the npm dependency.
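
The "chain" abstraction fits in a few lines of Python. In this sketch, `chain`, `grep`, and `uniq` are illustrative helpers written to mirror the shell pipeline above; in a real prompt chain each step would be a function that calls a model.

```python
from functools import reduce

def chain(*steps):
    """Compose steps left to right, like `a | b | c` in a shell."""
    return lambda value: reduce(lambda acc, step: step(acc), steps, value)

# Plain-Python stand-ins for: grep pattern | sort | uniq
def grep(pattern):
    return lambda lines: [line for line in lines if pattern in line]

def uniq(lines):
    # Like the shell's uniq, this drops only adjacent duplicates,
    # which is why the sort comes first.
    return [line for i, line in enumerate(lines) if i == 0 or line != lines[i - 1]]

log_pipeline = chain(grep("error"), sorted, uniq)
```

Each step takes the previous step's output and knows nothing else about its neighbours, which is the whole contract of a pipe.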

Multi-agent systems: multiple specialised agents, each handling one part of the problem, passing results between them. That's microservices[microservices]. That's the Unix philosophy: do one thing well, compose with others. Having multiple model instances critique each other's outputs does produce measurably better results, and that's worth building on. But the value comes from the architecture pattern, not from naming each instance after a colleague.

Guardrails: validating what goes into and comes out of the model, filtering harmful content, checking outputs against rules. That's input validation. That's sanitisation. Every web developer who's ever escaped HTML or parameterised a SQL query has implemented guardrails. We just called it "not getting hacked."
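
A minimal sketch of what that looks like around a model call, using only the standard library. The length limit and the `sk-` key pattern are assumptions chosen for the example; real rules would come from your own threat model.

```python
import html
import re

MAX_INPUT_CHARS = 2000  # hypothetical limit for this example
SECRET_PATTERN = re.compile(r"sk-[A-Za-z0-9]{8,}")  # hypothetical key format

def check_input(user_text: str) -> str:
    """Reject oversized input before it ever reaches the model."""
    if len(user_text) > MAX_INPUT_CHARS:
        raise ValueError("input too long")
    return user_text.strip()

def check_output(model_text: str) -> str:
    """Redact secret-looking strings, then escape HTML before rendering."""
    redacted = SECRET_PATTERN.sub("[redacted]", model_text)
    return html.escape(redacted)
```

Validate on the way in, sanitise on the way out. The model in the middle is treated like any other untrusted source of text.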

Agent loops: try something, check if it worked, adjust, try again. That's a while loop with a conditional break. It's retry logic with backoff. PID controllers[pid] have been doing this in industrial systems for a century. The reasoning engine inside the loop is new, and that matters. But nobody ever gave their PID controller a job title.
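
Stripped of branding, the loop is short enough to read in one go. This is a sketch under assumptions: `attempt` and `check` are hypothetical callables you supply, with the model call living inside `attempt` and the verification (tests, a linter, a schema check) inside `check`.

```python
import time

def run_until_ok(attempt, check, max_tries=5, base_delay=0.5, sleep=time.sleep):
    """Try, check, back off, try again: the skeleton of an agent loop."""
    feedback = None
    for tries in range(1, max_tries + 1):
        result = attempt(feedback)    # the reasoning engine would live here
        ok, feedback = check(result)  # e.g. run the tests on the output
        if ok:
            return result, tries      # the conditional break
        sleep(base_delay * 2 ** (tries - 1))  # exponential backoff
    raise RuntimeError(f"gave up after {max_tries} tries")
```

The `sleep` parameter is injectable so the loop can be tested without waiting. Feeding `feedback` from one iteration into the next is what separates this from blind retrying, and it is also exactly what a feedback controller does.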

None of these patterns are useless. They matter. The point is that dressing them up in novel terminology obscures the fact that we already know how to think about these problems. Structure your inputs, handle your errors, test your outputs, compose small pieces into larger systems. The new terminology doesn't help engineers build better systems. It helps conference speakers sell tickets.

We've seen this convergence before

Everyone who's worked in tech long enough remembers watching job titles dissolve and reform as the infrastructure underneath them shifted.

Mainframes gave way to on-premise servers. Bare metal[baremetal] gave way to virtualisation[virtualisation]. Private clouds gave way to public clouds. Each transition didn't just change the technology, it changed who did what.

Think about the virtualisation era. You had the networking guy. The storage guy. The DBA[dba]. The virtualisation guy. Each was a specialist with deep, narrow knowledge. The networking person could configure VLANs[vlan] in their sleep but never touched a SAN[san]. The storage person lived in their own world of LUNs[lun] and RAID[raid] arrays. Clear boundaries, clear titles, clear lanes.

Then VMware[vmware] happened, and those boundaries started blurring. The virtualisation admin suddenly needed to understand networking (vSwitches, port groups), storage (datastores, VMDK provisioning), and enough about operating systems to troubleshoot guest issues. Roles that used to be four people started converging into one or two people with broader responsibilities.

Public cloud accelerated this dramatically. Platform engineering emerged, and everyone became the networking, database, orchestration, and operating system expert. Not because people got smarter overnight, but because the domains themselves shrank.

You no longer needed full DBA experience to deploy and manage RDS[rds]. You needed to understand the offering: which engine, which instance class, backup retention, read replicas. The depth collapsed into breadth. With S3[s3], the storage specialism went away almost entirely: you just needed to know bucket policies and lifecycle rules.

The pattern repeated across every domain. VPCs[vpc] and security groups instead of physical switches and firewalls. Managed databases instead of hand-tuned Oracle instances. IAM[iam] policies instead of Active Directory[ad] on bare metal in a cupboard. A managed Kubernetes[k8s] service instead of hiring a dedicated team. Monitoring went from Nagios[nagios] on a VM you maintained yourself to a SaaS dashboard.

At every stage of this convergence, the roles changed but the people were respected. Nobody called their Terraform module "Dave the Infrastructure Engineer." Nobody branded their CI pipeline as a "Digital Platform Team." The automation did what it did, and the humans adapted by learning broader skills.

The job titles evolved honestly. "Sysadmin" became "DevOps Engineer" became "Platform Engineer" became "Cloud Engineer." Each shift acknowledged that the work had changed while respecting that a human was still doing it. The tools got better. The humans grew with them.

"We automate toil so humans can focus on the interesting problems" was the framing, and it worked because it was honest. The tooling was respected as tooling, the humans were respected as humans, and adoption happened naturally because people could see the value without feeling threatened.

That's the model AI tooling should follow. Instead, we skipped straight to giving the automation a job title.

Where the persona instinct came from

To be fair, the "you are an expert X" pattern didn't start as nonsense. It started as a useful technique with real evidence behind it.

LLMs are trained on vast amounts of text written by people in different roles, contexts, and levels of expertise. When you tell a model "you are a senior security engineer reviewing this code," you're not casting a spell. You're narrowing the probability distribution. The model has seen millions of tokens[tokens] written by security engineers, and the role prefix steers it toward that region of its training data. The outputs become more focused, more domain-appropriate, more likely to surface the patterns you care about. Early prompt engineering[prompteng] research suggested this: role prompting can measurably improve output quality for specific tasks.

The effect was clearest with earlier models. GPT-3.5 and early GPT-4 responded noticeably to role framing because those models benefited more from explicit steering. But the picture has become murkier as models have improved. With stronger models, the gap between "you are a senior security engineer" and just describing the task you want done narrows considerably. It's also hard to argue that the probability distribution for "Scrum Master" is meaningfully different from "Project Manager" when both are reviewing the same backlog.

The labs themselves seem to have noticed. OpenAI, Anthropic, and Google have all moved toward task-oriented agent design: skills, tools, structured workflows. Even when they use system-level definitions, those definitions describe what the agent should do, not what job title it holds. A brief "you're an expert at frontend accessibility" might still help. But a full page describing what a frontend designer is (their career history, their preferred methodologies, their communication style) can actively steer the model into a worse probability distribution than simply saying "review this component for accessibility issues." Being specific about the task beats being specific about the persona.

And that's where things went sideways. Instead of recognising that the technique had limits, people doubled down.

The leap from "a role prefix helps the model focus" to "let's build 21 named personas with backstories and have them debate each other" is enormous. Somewhere along the way, a useful prompting technique became a design philosophy, and the design philosophy became a product category. People who'd read a few blog posts about prompt engineering decided that if "you are a QA engineer" improves code review, then surely a framework with a named QA persona, a Product Manager persona, an Architect persona, and a Party Mode where they all argue with each other must be even better.

It isn't. One model wearing different hats, pretending to disagree with itself. Real cross-functional discussion involves competing priorities, hard-won domain expertise, and the kind of context you can't fit in a system prompt[systemprompt]. Simulating that isn't collaboration, it's theatre. I've written before about how abstraction layers hold newer models back, and this is a textbook case. More ceremony doesn't mean better outputs. More personas don't mean better analysis. Whatever value the role prefix once had, everything bolted on top is set dressing.

And there's an irony here. The people building these persona frameworks often used AI to generate the persona descriptions themselves. Pages of AI-generated slop defining what a "Product Manager agent" should do, written in that unmistakable ChatGPT house style: "As a seasoned product management professional, I leverage my extensive experience to..." I've touched on this pattern before, calling it "vibe specifying": using AI to generate impressive-looking documents that have never been tested against reality. The persona frameworks are vibe specifying applied to the tools themselves.

The rebranding problem

These frameworks aren't selling a technique. They're selling an org chart. Some ship 20+ named personas, each with their own slash command, backstory, and simulated expertise. Strip the branding away and what you're looking at is a system prompt with a role prefix, the same useful technique from two years ago, now dressed up as a methodology.

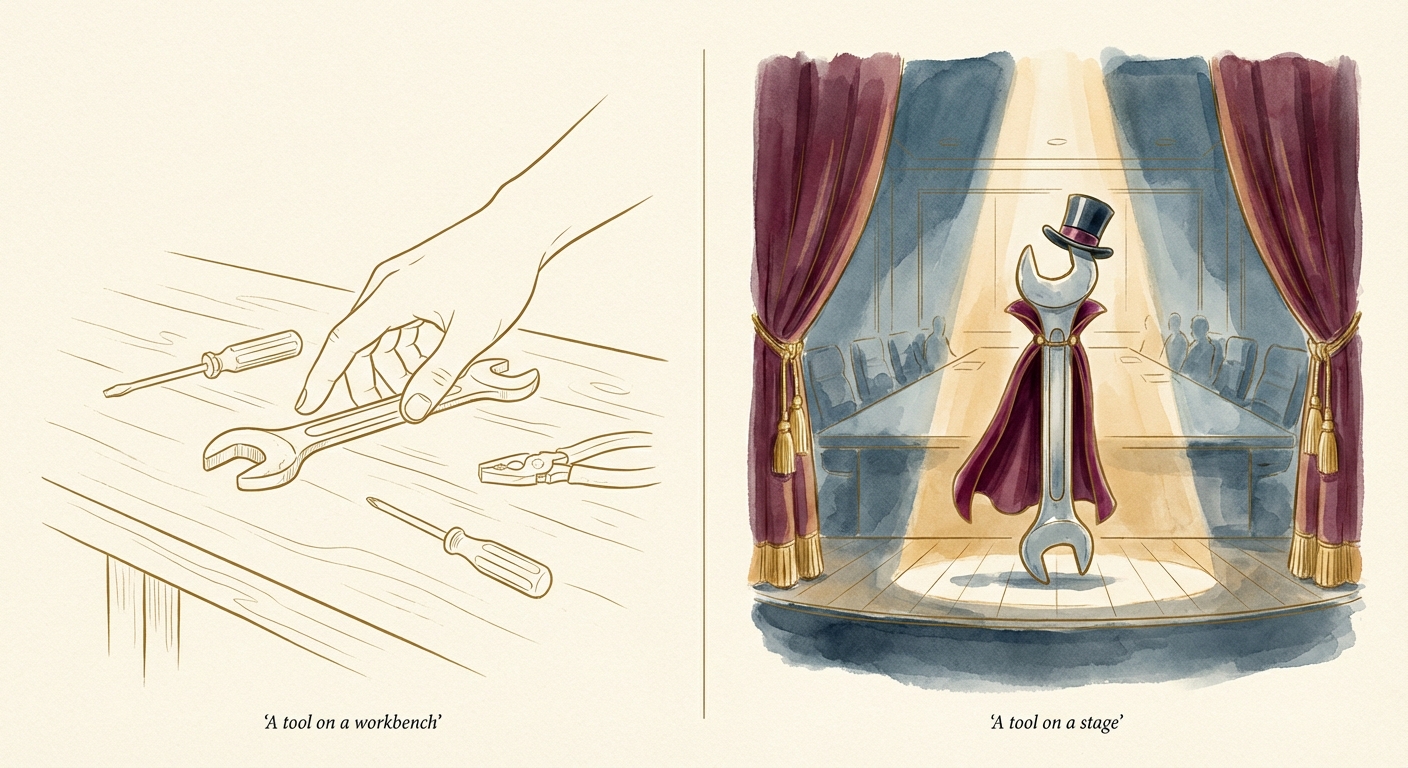

The biggest thing that changed with LLMs is the interface. Instead of YAML and bash, the automation accepts and produces natural language. That's useful, and it matters. But the underlying principle is identical to every other form of automation: you're automating a task, not replicating a person. The delegation model treats AI agents as digital workers sitting below you in a hierarchy. The persona frameworks are that model made literal: an entire simulated workforce defined in markdown.

Turning us against each other

The persona theatre isn't limited to open-source frameworks. It's gone corporate. Salesforce publicly dismissed Microsoft's AI agents as not being real agents. Google launched "Agentspace." Every major vendor is fighting to own the definition, as if the label itself is what matters.

Look underneath the branding. Microsoft's Copilot "agents" are, in many cases, Power Automate[powerautomate] flows: the same workflow automation that's been available to every Microsoft 365 tenant for years. Before "AI agents," Microsoft tried to mainstream this same capability as "Citizen Development"[citizendev], the idea that non-technical people could build their own automations with low-code tools. It never really took off. Most people didn't automate their workflows when it was called Power Automate. Rebrand it as an AI agent, and suddenly it's transformational. Same capability, new name tag.

And the adversarial framing is spreading beyond the vendors. Browse any discussion about AI and you'll find people in different roles pointing at each other. Non-technical staff arguing that developers are about to be automated away. Technical staff insisting that project management is "just a prompt." Everyone reaching for AI as proof that someone else's job is the expendable one, while their own work is too nuanced, too human, too important to reduce.

This is what the theatre produces. Not collaboration, not curiosity, not people exploring what they could build with better tools. Just fear, turning colleagues into competitors.

The dignity problem

This might sound like a semantic complaint. It isn't.

When you create a "Product Manager agent" or an "Architect agent," you're implicitly saying that person's job can be reduced to a system prompt. A real product manager brings years of domain knowledge, organisational context, political awareness, and intuition that can't be captured in a markdown file. A real architect makes trade-offs based on experience, constraints they've hit before, failures they've learned from. Boiling that down to a persona in a framework isn't just technically inaccurate, it's disrespectful. I've talked about this dynamic in the context of individuals who anthropomorphised models, treating them like junior developers who just needed clearer instructions. The persona frameworks industrialise that same mistake into a product.

And people notice. When someone who's been a QA engineer for a decade sees a GitHub repo proudly advertising an AI agent that supposedly does their job via a slash command, the message they receive isn't "AI will help you." The message is "you're a prompt."

That's not a perception problem. That's the actual message being sent.

A note for leaders

If you're in a leadership position and you've been excited about these frameworks, this is worth pausing on.

Everyone in tech is thinking about their future right now. AI is moving fast, roles are shifting, and there's genuine uncertainty about what work looks like in two or three years. That's already a lot to navigate. Into this environment, some frameworks arrive announcing they've built your "digital team": the whole org chart recreated in markdown, ready to replace the meeting you were in this morning.

When your team pushes back on AI adoption, the instinct is to label it resistance to change. But resistance rooted in fear is fundamentally different from resistance rooted in laziness. When someone sees their job title attached to a system prompt, what they feel is an existential threat, not technophobia. Dismissing that as "they just need to get on board" is a failure of empathy, not a change management problem.

Leaders who lack self-awareness tend to overestimate how clearly they communicate and underestimate how much stress their team is under. A leader who thinks naming AI agents after job roles is "just a naming convention" is filtering out the emotional signal from their team. Acknowledging that signal would mean admitting they hadn't thought it through. Your excitement about the tool is not your team's experience of it.

The environment determines the behaviour. An environment where automation is named after people's job titles makes people feel threatened, so they disengage. An environment where automation is honestly named for what it does, a scheduled task, a workflow, a check, makes people feel respected, so they lean in.

This matters beyond morale. Google's Project Aristotle[aristotle] found that psychological safety[psychsafety] was the strongest predictor of team effectiveness, more than composition, seniority, or individual talent. People need to feel they won't be punished or humiliated for speaking up. Naming an AI agent after someone's role signals that the role is automatable, which makes people less likely to engage with the tool honestly. They won't tell you when the AI gets things wrong, because pointing out the bot's limitations feels like arguing for their own survival rather than contributing to a technical discussion.

There's a difference between compliance and commitment. Threatening framing gets you compliance: people will use the tools because they have to, not because they want to. You lose the very engagement and creativity that AI adoption needs to succeed. The naming conventions you choose for your AI tooling are not a trivial detail. They are a signal to your team about whether you see them as people or as prompts.

People aren't resisting AI because they're luddites[luddites]. They're resisting because the people promoting it are being careless with their dignity.

Just call it what it is

LLMs are a genuinely powerful form of automation. They deserve better than the costume party the ecosystem is throwing them. The automation works. The patterns are sound. The problem was never the tooling, it was pinning a name badge on it.

The convergence era taught us something the current AI discourse keeps forgetting. When managed databases arrived, I was relieved. I'd always been hesitant about full database administration, and not having to master it all wasn't a loss: it was freedom to focus on problems I actually cared about. Most people didn't mourn the toil. They grew. Access to knowledge is being democratised at a pace we haven't seen before. If you've spent years working with complex data structures, that thinking transfers wherever you want to take it. If you still love what you do, you can now do it at a scale that wasn't possible before.

Cloud was never about where you do computing. It was about how you do computing. When infrastructure costs collapsed, entirely new industries emerged because the barrier to starting something dropped by orders of magnitude. AI is the same kind of shift. The question isn't which jobs survive. It's how many new ones get created when the cost of knowledge drops the way infrastructure costs did. That era made platform engineers out of sysadmins. This one will create roles we haven't named yet, and that's worth being excited about, so long as we let people grow into them instead of telling them they've been automated.

Call it what it is. Respect the tool for what it does. Respect the humans for what they do. The people you're trying to help might actually want to use it.

[terraform] Infrastructure-as-code tool that defines cloud resources in configuration files, allowing you to version and automate infrastructure the same way you version application code.

[cicd] Continuous Integration/Continuous Delivery. Automated pipelines that build, test, and deploy code whenever changes are pushed.

[cron] A scheduled task on Unix/Linux systems that runs automatically at set intervals. Named after the cron daemon, which has been part of Unix since the 1970s.

[llm] Large Language Model. AI systems like GPT and Claude, trained on massive text datasets to generate and understand natural language.

[airflow] Apache Airflow, an open-source platform for scheduling and orchestrating data workflows. Originally built at Airbnb.

[stepfunctions] AWS Step Functions, Amazon's managed service for building visual workflow automations with built-in error handling and retry logic.

[dag] Directed Acyclic Graph. A structure where tasks flow in one direction with no loops, used to define execution order in workflow and pipeline systems.

[rag] Retrieval-Augmented Generation. A pattern where relevant documents are fetched from a search index and included in the LLM's prompt to give it factual context.

[elasticsearch] An open-source search and analytics engine, widely used for full-text search, log analysis, and real-time data exploration.

[microservices] An architectural pattern where applications are built as a collection of small, independently deployable services, each responsible for one specific function.

[pid] Proportional-Integral-Derivative controllers. Feedback loops used in industrial automation to maintain a target value (temperature, speed, pressure) by continuously measuring error and adjusting inputs.

[baremetal] Physical servers with no virtualisation layer. You manage the actual hardware directly: CPU, RAM, disks, and network cards.

[virtualisation] Running multiple virtual computers (VMs) on a single physical machine, sharing the underlying hardware. Each VM behaves like an independent server.

[dba] Database Administrator. A specialist responsible for managing, tuning, backing up, and securing databases.

[vlan] Virtual Local Area Networks. A way to segment network traffic into isolated groups without needing separate physical infrastructure.

[san] Storage Area Network. A dedicated high-speed network connecting servers to shared block storage devices.

[lun] Logical Unit Numbers. Identifiers for individual storage volumes presented by a SAN to connected servers.

[raid] Redundant Array of Independent Disks. A method of combining multiple physical drives for improved performance, redundancy, or both.

[vmware] A virtualisation company whose products (vSphere, ESXi) became the industry standard for running multiple virtual servers on a single physical machine.

[rds] Amazon Relational Database Service. A managed database offering that handles backups, patching, scaling, and replication for engines like PostgreSQL and MySQL.

[s3] Amazon Simple Storage Service. Cloud object storage accessed via API, used for everything from hosting static websites to storing massive datasets.

[vpc] Virtual Private Clouds. Logically isolated network environments within a public cloud platform, giving you control over IP ranges, subnets, and routing.

[iam] Identity and Access Management. The system controlling who can access what in a cloud environment, through users, roles, and permission policies.

[ad] Microsoft's directory service for managing users, computers, and access policies in corporate networks. Now largely succeeded by Microsoft Entra ID in cloud environments.

[k8s] An open-source container orchestration platform that automates deploying, scaling, and managing containerised applications. Originally built by Google.

[nagios] An open-source infrastructure monitoring tool that was the standard for server and service health alerting before SaaS monitoring platforms took over.

[tokens] In LLM context, the smallest units of text a model processes. Roughly three-quarters of a word on average. More tokens processed means more compute cost.

[systemprompt] Hidden instructions given to an LLM before the user's conversation begins, defining its behaviour, constraints, and persona. The user typically doesn't see these.

[prompteng] The practice of crafting inputs to LLMs to produce better, more consistent, and more relevant outputs.

[psychsafety] The belief that you can speak up, ask questions, or admit mistakes at work without being punished or embarrassed. A concept from organisational psychology, popularised by Harvard professor Amy Edmondson.

[luddites] Originally a 19th-century movement of English textile workers who smashed machinery they believed threatened their livelihoods. Now used as shorthand for anyone who resists new technology.

[aristotle] Google's internal research initiative (2012-2015) that studied 180 teams to understand what makes them effective. Its key finding: psychological safety mattered more than team composition, seniority, or individual talent.

[powerautomate] Microsoft's low-code workflow automation platform, part of the Microsoft 365 and Power Platform suite. Allows building automated workflows between applications and services using a visual designer.

[citizendev] A strategy where non-technical employees build applications and automations using low-code or no-code platforms, reducing dependence on IT departments for routine business tools.