I was watching a 2023 interview with Dario Amodei[dario] on the Dwarkesh Patel[dwarkeshpatel] podcast, in which he talked about what might cause AI scaling to hit a wall. Not a hardware limit or a data shortage, but something about the loss function[lossfunction] itself: when you train on next-word prediction[nextword], the model over-focuses on surface-level patterns and doesn't pay enough attention to the rare tokens[tokens] that carry the real reasoning.

"If you really want to learn to program at a really high level, it means you care about some tokens much more than others. And they're rare enough that the loss function over-focuses on the appearance, and doesn't focus on the stuff that's really essential."[source]

That was more than two years ago. Since then, Anthropic has become one of the leading AI labs in the world. But it's not the company's trajectory that stuck with me; it's this specific observation. Dario was talking about model training, not organisations. But I couldn't stop hearing it as a description of what's happening with AI adoption right now. Everyone optimising for the visible, surface-level stuff. The appearance of progress. While the essential, harder work gets drowned out.

That thought sent me looking for historical parallels, and I landed on one that was almost too good.

Twenty-seven million people watched the lights come on

In 1893, twenty-seven million people visited the Chicago World's Fair[worldsfair] to watch electricity perform. Giant searchlights swept the grounds, reportedly visible sixty miles away. Towers and columns blazed with thousands of bulbs. Murat Halstead[halstead], writing for Cosmopolitan (which was a serious current-affairs journal back then, not what you're thinking), described how "the earth and sky were transformed by the immeasurable wands of colossal magicians."

That same year, less than half a percent of Chicago's population had electric lights in their homes.

None of it was a lie, exactly. The technology was real. The lights worked. The spectacle was genuine. But the distance between what electricity could do on a stage and what it could do in someone's life was enormous.

The steam engine with a new label

Here's the part of the electricity story that doesn't make it into the inspirational conference talks.

When factories first adopted electricity, most of them did something entirely predictable: they ripped out the steam engine and dropped an electric motor in its place. Same building. Same layout. Same system of shafts, pulleys, and belts distributing power from a central point. Same multi-storey design dictated by the need to keep machines close to the drive shaft.

The result? Almost no productivity gain. For over thirty years, from the 1880s through the 1910s, electrification was visible everywhere and in the productivity statistics nowhere. The economist Paul David[pauldavid] documented this in his landmark 1990 paper, drawing a direct line to Robert Solow's[solow] famous observation about computers: "You can see the computer age everywhere but in the productivity statistics."

The gains only came in the 1920s, when manufacturers finally stopped treating electricity as a drop-in replacement and started redesigning from the ground up. Unit drive[unitdrive]: every machine gets its own motor. No more shafts. No more belts. Factories could be single-storey, laid out to follow the flow of production rather than the flow of mechanical power. Different divisions could operate independently. Machines that weren't running didn't waste energy.

Manufacturing labour productivity growth more than doubled, from 1.3% a year to 3.1%. Electrification accounted for half of all productivity growth in manufacturing during that decade.
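The 1.3% and 3.1% figures are annual growth rates, so their effect compounds. A minimal sketch of that arithmetic follows. It assumes each rate holds constant for a full decade, which is a simplification of mine rather than something the historical data guarantees, and the helper names are hypothetical.

```python
import math

def cumulative_growth(annual_rate: float, years: int) -> float:
    """Total multiplier on output per worker after compounding for `years`."""
    return (1 + annual_rate) ** years

def doubling_time(annual_rate: float) -> float:
    """Years needed for productivity itself to double at a constant rate."""
    return math.log(2) / math.log(1 + annual_rate)

before = 0.013  # 1.3% annual growth, the earlier rate quoted above
after = 0.031   # 3.1% annual growth, the 1920s rate quoted above

for label, rate in [("before", before), ("after", after)]:
    print(
        f"{label}: x{cumulative_growth(rate, 10):.3f} over a decade, "
        f"doubles in {doubling_time(rate):.1f} years"
    )
```

Under those assumptions, a decade at 1.3% compounds to roughly 14% more output per worker, while a decade at 3.1% compounds to roughly 36%. The gap between the two rates looks small in any single year and large over ten.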

The technology hadn't changed. What changed was that people stopped overlaying the new system on top of the old one.

Sixty miles of searchlights

Silicon Valley is running the 1893 World's Fair on repeat. Every week brings another demo, another launch, another agent framework that can do something extraordinary on stage. And like the fair, none of it is fake. The models are genuinely capable. The demos genuinely work. The technology is real.

But the distance between a demo and a deployment is the same gap that separated the fairground searchlights from the 99.5% of Chicago homes sitting in the dark.

We've built an entire ecosystem around the spectacle. Startups raise rounds on demo videos. "SaaS killers" launch on Twitter with a single prompt and a screen recording. Conference keynotes feature multi-agent orchestration systems with named personas debating each other. The audience watches, impressed, and thinks: this is the future.

It is. In the same way that the 1893 World's Fair was the future.

I should be fair here, though. The spectacle matters. Those twenty-seven million visitors went home and wanted electricity. The World's Fair created demand, attracted investment, and built the political will that funded rural electrification programmes decades later. Demo culture serves a real function: it shows people what's newly possible and drives the capital that funds serious engineering work.

The problem isn't the demos. It's mistaking the demo for the deployment. The World's Fair lit up a city for six months. It took another thirty years before the technology actually changed how factories worked. The spectacle and the substance aren't opposing forces, but they're not the same thing either, and right now the ecosystem is heavily weighted toward one side.

The PoC on someone's laptop

The gap between spectacle and substance shows up differently depending on where you sit. For startups and smaller teams, the distance between demo and production can be genuinely short. Different constraints, different risk tolerances, different definitions of "ready." Some of the best AI-native tools started as exactly the kind of weekend prototype that looks unserious from an enterprise boardroom.

But if you're deploying inside an enterprise, you've lived through this pattern long before AI arrived.

You can build an impressive product in a weekend. A beautiful website. A clever integration. A working prototype that genuinely solves a problem. On someone's laptop, in a demo environment, with a fresh database and no edge cases, it works brilliantly.

Then you try to productionalise it.

Security review. Data classification. Identity and access management. Network architecture. Disaster recovery. Compliance frameworks. Service management wrappers. Deployment pipelines that meet enterprise policy. Monitoring, alerting, incident response. Change advisory boards. The quick PoC[poc] becomes a multi-year programme.

This isn't bureaucracy for its own sake (mostly). These requirements exist because enterprises operate at a scale and consequence level where "it works on my laptop" is the beginning of the conversation, not the end.

AI has made the gap worse, not better. The demos are so much more convincing now. A weekend prototype with an LLM[llm] can produce outputs that look indistinguishable from enterprise-grade solutions. Natural language makes everything feel accessible. "Look, the agent can do it" is the new "look, the lights are on."

Enterprise AI deployment faces every constraint that existed before AI, plus new ones. Model governance. Prompt injection[promptinjection]. Data residency for inference. Cost management at scale. Hallucination risk[hallucinationrisk] in regulated contexts. And the fundamental question of who is accountable when the agent gets it wrong.

These aren't solved by a better prompt. They're solved by the same unglamorous architectural work that's always separated prototypes from production systems.

Appearance tokens

This is where Dario's loss function metaphor keeps pulling me back.

A loss function tells the model what to optimise for. If it weights every token equally, the model gets very good at predicting common patterns, surface-level language, the stuff that appears most frequently. It produces text that looks right. But the rare tokens, the ones that carry the actual reasoning, the real programming logic, get drowned out by the noise of optimising for appearances.
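A toy calculation makes the mechanism concrete. The sketch below is my own illustration, not anything from the interview: the token counts, the probabilities, and the 50x weight are all made-up assumptions, and the weighting scheme is not a claim about how any lab actually trains models. It compares two hypothetical improvements, a small polish on 98 common tokens versus a large fix on 2 rare ones, under an average loss that treats every token equally.

```python
import math

def mean_loss(probs, weights=None):
    """Weighted average negative log-likelihood over a batch of tokens."""
    if weights is None:
        weights = [1.0] * len(probs)  # every token counts equally
    total = sum(w * -math.log(p) for p, w in zip(probs, weights))
    return total / sum(weights)

# Hypothetical batch: 98 common "appearance" tokens, 2 rare "essential" ones.
N_COMMON, N_RARE = 98, 2

def batch(p_common, p_rare):
    """Probability the model assigns to the correct token, per position."""
    return [p_common] * N_COMMON + [p_rare] * N_RARE

scenarios = {
    "baseline": batch(0.90, 0.05),       # good on common, poor on rare
    "polish_common": batch(0.95, 0.05),  # slightly better on common tokens
    "fix_rare": batch(0.90, 0.50),       # ten times better on rare tokens
}

# Equal weighting: which change lowers the average loss more?
uniform = {name: mean_loss(b) for name, b in scenarios.items()}

# Assumed alternative: rare tokens count 50x (an illustrative choice only).
rare_weights = [1.0] * N_COMMON + [50.0] * N_RARE
weighted = {name: mean_loss(b, rare_weights) for name, b in scenarios.items()}

for name in scenarios:
    print(f"{name:14s} uniform={uniform[name]:.4f} weighted={weighted[name]:.4f}")
```

With equal weighting, the small polish on common tokens lowers the average loss more than the tenfold improvement on rare tokens, simply because there are so many more common tokens. Once the rare tokens are weighted more heavily, the ordering flips. What gets rewarded depends on what the measure counts.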

I'm not an ML researcher, so I'm borrowing the metaphor loosely. But the parallel to how organisations adopt AI feels uncomfortably precise.

The visible tokens are easy to count: number of AI initiatives launched, agents deployed, tools procured, demos delivered, press releases issued. These are the high-frequency tokens of enterprise AI adoption. They appear in board decks and quarterly reviews. They look like progress.

The essential tokens are harder to spot: whether anyone actually understands how the models work, whether the architecture can support production workloads, whether the organisation has built the literacy to distinguish what AI handles reliably from what it doesn't, whether the deployment meets real security and governance requirements. These tokens are rare. They don't photograph well. They don't make good LinkedIn posts.

So the organisational loss function over-indexes on appearance. And the loss is real.

I should be honest about why this happens, though. Leaders don't optimise for appearance out of vanity. They do it because the board has read the McKinsey report[mckinsey] claiming AI will add trillions in value. The CEO came back from Davos demanding an AI strategy. Competitors, or at least their press releases, claim transformative deployments. In that environment, a leader who stands up and says "we're building literacy and doing the unglamorous work" risks looking like the person who doesn't get it. Appearance tokens aren't always vanity. Sometimes they're survival: the visible progress that buys you cover to do the real work underneath.

The skill isn't eliminating appearance tokens entirely. It's making sure they don't become the only thing you optimise for.

Electropathic belts and agent personas

While I was digging into the electricity history, I found something I wasn't expecting.

When electricity was new and poorly understood, an entire industry sprang up selling products that used electricity as a brand rather than a mechanism. Electropathic belts[electropathicbelts], marketed as cures for everything from rheumatism to "loss of virility," sold tens of thousands of units through the 1890s and appeared in the Sears catalogue. Thomas Edison's own son[edisonjr] sold a "Magno-Electric Vitalizer" claiming to cure paralysis and make people smarter. The US Patent Office rejected it twice as inoperable. Edison Sr. had to sue his own son to stop him using the family name.

The belts didn't work because they weren't actually using electricity in any meaningful way. Lord Kelvin[kelvin] testified in 1893 that one popular belt was "incapable of generating an electric current" at all. The product was the appearance of electricity, not the substance.

I look at parts of the AI industry and see electropathic belts. Agent frameworks where twenty-one named personas with backstories "debate" each other, producing outputs that look sophisticated but add nothing over a single well-structured prompt. "AI agents" that are rebranded workflow automations with a chat interface bolted on. Microsoft quietly renaming Power Automate[powerautomate] flows as "AI agents" after "Citizen Development" failed to gain traction. Same capability, new label.

The pattern is always the same. Optimise for what looks impressive. Ignore what's essential. Absorb the loss. I've written about this before: the persona theatre, the untested specifications, the imagination-first failure cycle. The branding changes but the dynamic doesn't.

Redesigning the factory

What struck me most about the electricity research was how rational the failure was.

The factories that gained nothing from electricity in the 1890s weren't stupid. They made a rational decision: take the new technology and fit it into the existing system. It was cheaper, faster, and less disruptive than rebuilding from scratch. And for thirty years, it produced mediocre results that probably seemed acceptable because everyone else was getting the same mediocre results.

The breakthrough came when manufacturers stopped using electricity as a faster version of steam and started redesigning from scratch. It wasn't the established factories that made the leap. They couldn't, because their buildings, workflows, and capital were all built around the old model. It was the new companies, starting fresh, that designed their operations around what electricity actually made possible.

The same choice is in front of every organisation adopting AI today, and this is where the startup world often gets it right. A team building an AI-native product from scratch, with no legacy architecture to accommodate, is doing exactly the factory-redesign work that the electricity era rewarded. They're not constrained by the steam-era layout. The best of them aren't just bolting an LLM onto an existing workflow: they're asking what the workflow looks like when AI is the starting point, not an addition.

For enterprises, the situation is more constrained. You can't tear down the factory and start from scratch. The governance, compliance, and security requirements are there for good reason, and they're not going anywhere. But within those constraints, there's still a difference between bolting an AI assistant onto an unchanged process and asking whether the process itself still makes sense. You can put an "AI-powered" label on a product that works exactly the way it did before, but that is still the electric motor dropped into the steam-era layout. It might produce small gains. It won't produce the returns that justify the investment.

Or you can ask the harder question: what does our work look like when designed around what AI actually does well?

That question can't be answered from a conference keynote or a vendor demo. It can only be answered by people who understand the technology well enough to know its actual capabilities and constraints. Not what the demo showed. Not what the roadmap promises. What it does, today, reliably, in your specific context.

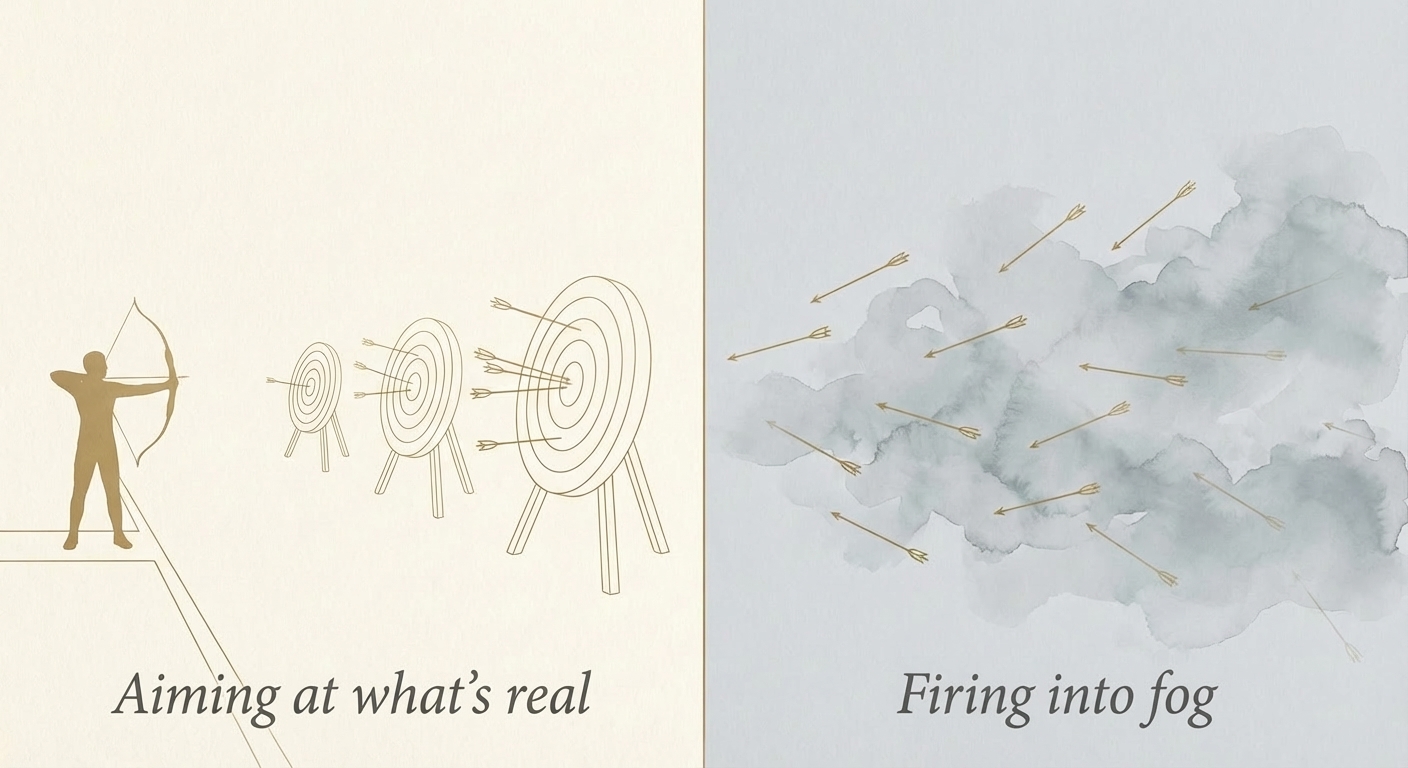

Think of it like archery. You can aim at a target you can see, or you can fire arrows into fog because someone told you there might be a target out there eventually. A lot of organisations are doing the second: building programmes around abstract ideas about where AI might be in two years, capabilities that don't reliably exist yet. When those bets don't pay off, they blame the technology. But the technology wasn't the problem. The target was never there.

Yes, AI capabilities are moving fast, and pure present-state planning has its own risks. But an organisation that trains against real targets today builds the muscle to hit moving targets tomorrow. That's a fundamentally different position from one that spent two years firing into fog and has nothing to show for it.

The organisations that succeed won't be the ones with the biggest AI budgets. They'll be the ones whose people genuinely understand what they're working with. Build the literacy first. Understand the engine before you redesign the factory.

The essential tokens

In February 2026, Fortune reported[fortune2026] that thousands of CEOs admitted AI had no measurable impact on productivity, explicitly invoking the Solow paradox[solowparadox]. The computer age, everywhere but the productivity statistics. The electricity age, everywhere but the factory output. Now the AI age, everywhere but the bottom line.

The pattern has a resolution, though. It just takes longer than anyone wants.

Electricity's productivity gains took thirty years to materialise, not because the technology was slow, but because the essential work, redesigning systems from the ground up, couldn't be shortcut. You couldn't demo your way to a unit-drive factory. You had to actually rebuild. AI will likely compress that timeline. Software iterates faster than concrete. But the essential work is the same kind of work, even if it takes years rather than decades.

I started this article because a passing comment on a podcast made me think about loss functions differently. Not as a technical concept in model training, but as a pattern I keep seeing in how organisations approach AI. The more I pulled on that thread, the more the electricity parallel kept reinforcing it: the spectacle, the snake oil, the thirty years of bolting new technology onto old structures before anyone thought to redesign from scratch.

None of this makes me an expert in ML theory or electricity history. But I've spent enough time watching organisations adopt technology to recognise the pattern: optimise for what's shiny and presentable on the surface, ignore what's essential, absorb the loss.

The organisations that will see real returns from AI are the ones doing the unglamorous work right now. Building genuine technical literacy across their teams. Understanding the real constraints of deployment. Designing their operations around what AI actually does rather than what it promises to do. Focusing on the rare, essential tokens instead of the common, visible ones.

It doesn't make for a compelling demo reel. But it's the only work that actually reduces the loss.

[dario] Co-founder and CEO of Anthropic, the AI safety company behind Claude. Previously VP of Research at OpenAI, where he led development of GPT-2 and GPT-3.

[dwarkeshpatel] Podcaster known for The Dwarkesh Podcast, featuring long-form technical conversations with leading figures in AI, science, and history.

[lossfunction] A mathematical formula that measures how far a model's predictions are from the correct answers. The model adjusts itself to minimise this number during training. Lower loss means better performance.

[nextword] The core training method behind large language models. The model reads a sequence of text and learns to predict what comes next. By doing this billions of times across vast amounts of text, it develops broad language and reasoning abilities.

[tokens] The small units of text that language models process. A token might be a whole word, part of a word, or a punctuation mark. "Rare tokens" here means the uncommon patterns that carry disproportionate meaning.

[source] From "Dario Amodei (Anthropic CEO) - The hidden pattern behind every AI breakthrough," The Dwarkesh Podcast, August 2023. Transcript available here. The quote is lightly edited for readability.

[worldsfair] The World's Columbian Exposition, held in Chicago from May to October 1893. Westinghouse and Tesla's alternating current system powered the fair's lighting, a decisive moment in the "War of Currents" against Edison's direct current.

[halstead] Murat Halstead, "Electricity at the Fair," Cosmopolitan, September 1893. Halstead was one of the most prominent American journalists of the era.

[pauldavid] Paul A. David, "The Dynamo and the Computer: An Historical Perspective on the Modern Productivity Paradox," American Economic Review, vol. 80, no. 2, May 1990. One of the most cited works in the economics of technological change.

[solow] Robert Solow, "We'd Better Watch Out," New York Times Book Review, July 12, 1987. Solow won the Nobel Prize in Economics that same year. The quote is often cited incorrectly.

[unitdrive] A manufacturing approach where each machine has its own electric motor, replacing the older system where a single central engine powered everything through shafts and belts. This was the key innovation that unlocked electricity's productivity gains in the 1920s.

[poc] Proof of Concept. A small-scale experiment built to demonstrate that an idea or technology works in principle, before committing to full implementation.

[llm] Large Language Model. An AI system trained on enormous amounts of text that can generate, summarise, translate, and reason about language. GPT-4, Claude, and Gemini are examples.

[promptinjection] A security vulnerability where a user crafts input that tricks an AI model into ignoring its instructions and doing something unintended. The AI equivalent of SQL injection in traditional software.

[hallucinationrisk] The tendency of AI language models to generate confident-sounding but factually incorrect information. In regulated industries, this is a serious liability because the output looks authoritative even when wrong.

[mckinsey] McKinsey, "The Economic Potential of Generative AI," June 2023. Estimated $2.6 to $4.4 trillion annually in added value.

[electropathicbelts] Fraudulent medical devices from the late 1800s that claimed to cure ailments using electricity but generated no meaningful current. They appeared in the Sears catalogue and sold tens of thousands of units before being debunked.

[edisonjr] Thomas Edison Jr. repeatedly exploited the family name to sell fraudulent electrical medical devices. His father obtained a court injunction and paid Thomas Jr. $35 per week to use aliases instead.

[kelvin] William Thomson, 1st Baron Kelvin (1824-1907). One of the most prominent physicists of the Victorian era, known for the absolute temperature scale. His deposition debunking the Medical Battery Company's belt was dated 11 February 1893.

[powerautomate] Microsoft's workflow automation product. Microsoft rebranded Power Virtual Agents to Copilot Studio in November 2023 and later introduced "agent flows," while de-emphasising the "Citizen Development" terminology.

[fortune2026] "AI Productivity Paradox," Fortune, February 2026. Based on an NBER survey of roughly 6,000 executives, approximately 90% of whom reported no measurable productivity impact from AI.

[solowparadox] Named after economist Robert Solow's 1987 observation that computers were "everywhere but in the productivity statistics." Describes the recurring pattern where massive technology investment fails to show up in aggregate productivity numbers. Paul David's 1990 paper showed the same pattern occurred with electricity.